What the iPhone 12 Means for App Development

We had only just finished covering Apple’s September event when the company announced even more new products and advancements. The October event focused on the iPhone line and on the HomePod Mini, Apple’s answer to the Amazon Echo Dot. As developers, we believe it is essential to understand how new devices and technology will affect the software that runs on them. So we picked out the most pertinent information to explain what’s exciting about developing apps for iPhone 12.

In iPhone 12 Pro Max, we’ve been able to create our best camera ever.

Andrew Fernandez, Manager, Camera Systems Engineering

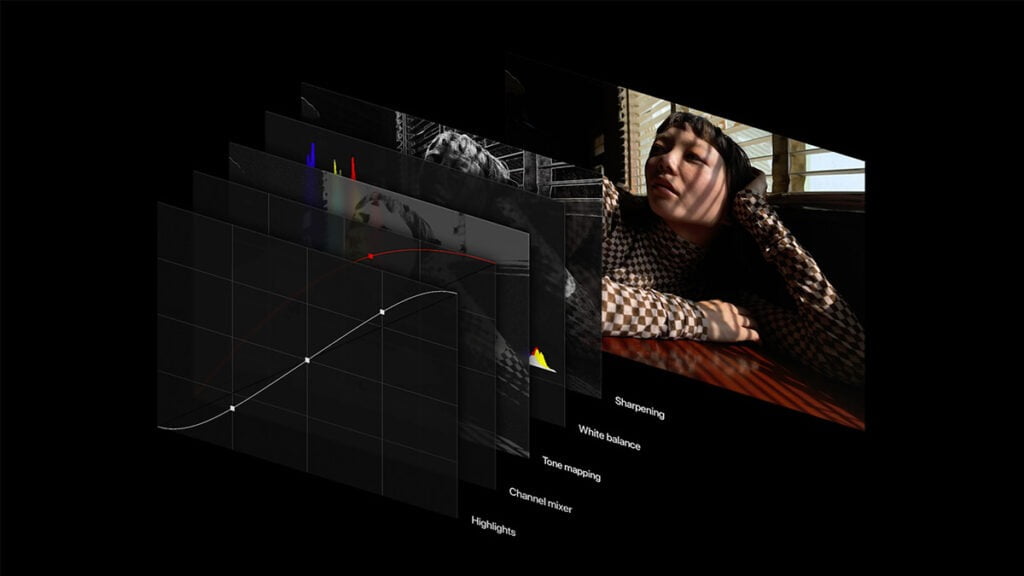

There was quite a bit of fluff during Apple’s October 13 event, but the iPhone 12 line and its cameras stole the show. The iPhone 12 and 12 Pro bring significant advances in photo quality and on-device editing. Apple bills them as the first phones to record and edit video in Dolby Vision HDR. Combined with the new A14 Bionic chip and the triple-camera array on the back of the Pro models, this opens a whole new door for photographers and developers looking to leverage the new iPhone’s creative capabilities.

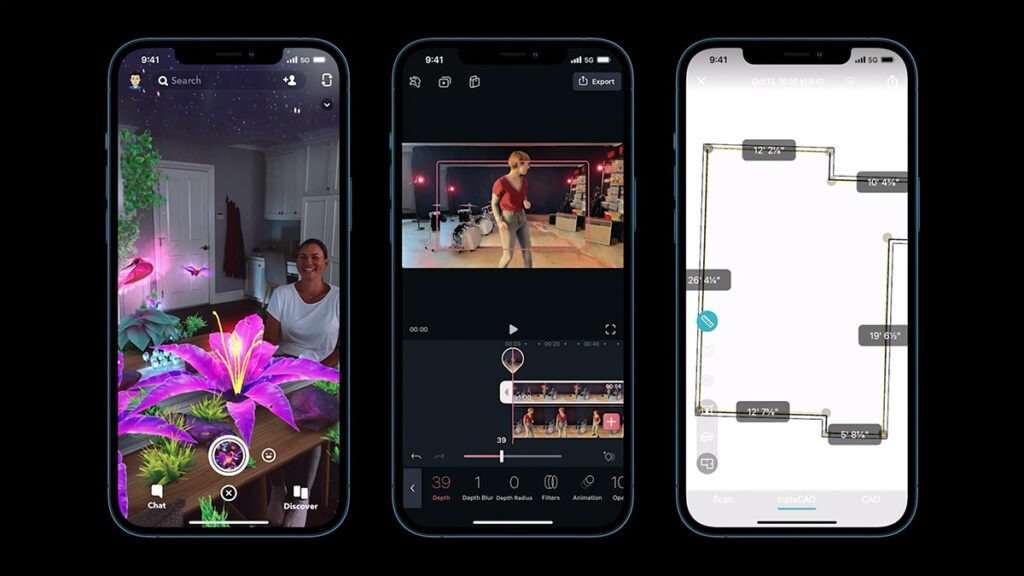

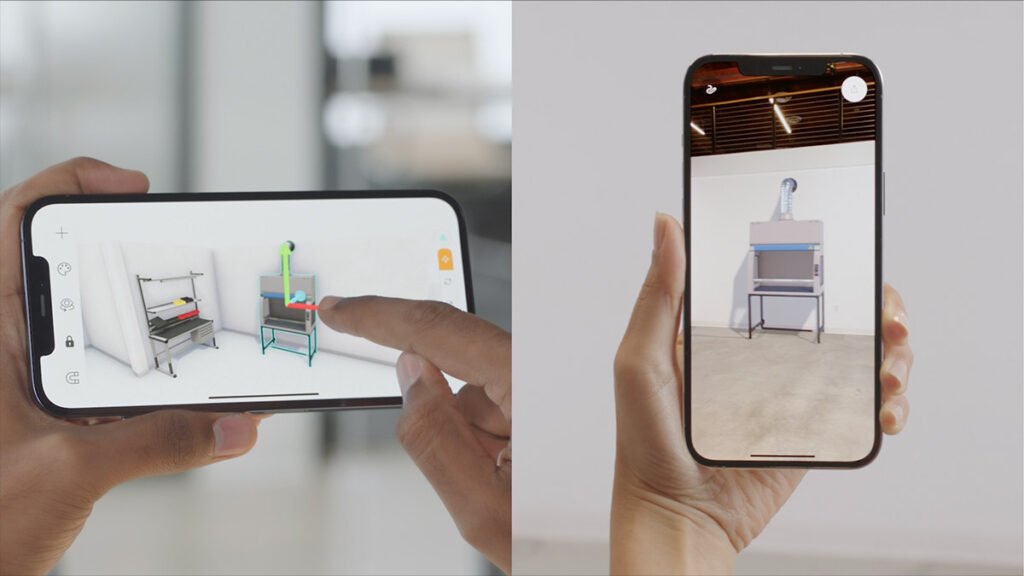

Alongside the third, telephoto lens, Apple has added a LIDAR sensor to the iPhone 12 Pro models. The depth sensing it provides, combined with the iPhone’s broad market reach, should bring new augmented reality (AR) applications to the forefront. The LIDAR sensor allows for smoother placement of AR objects in the real world. Those objects also stay anchored more firmly to a physical location, because the sensor gathers more information about the surroundings and builds a more accurate picture of the space.

According to Apple, the LIDAR sensor in the iPhone 12 Pro also delivers up to 6x faster autofocus in low light and enables Night mode portraits. This is not only great for urban photography; it can also be used in numerous ways by enterprise-level applications that rely on a device’s camera. For instance, a field service employee who previously could not show the inner workings of a complex machine through a help application may now be able to bring out more detail and display more accurate AR objects to aid in the repair process.

The use cases and opportunities for mobile applications are nearly endless. With the new iPhone’s LIDAR sensor, you could even create an app:

That places AR objects within a dimly lit room for Halloween decorations

That points out constellations in a more visible setting

That identifies landmarks and provides walking directions in a city

That scans a crawl space or attic for support beams and uneven surfaces

That surveys a building’s inner dimensions and room scale for machine placement

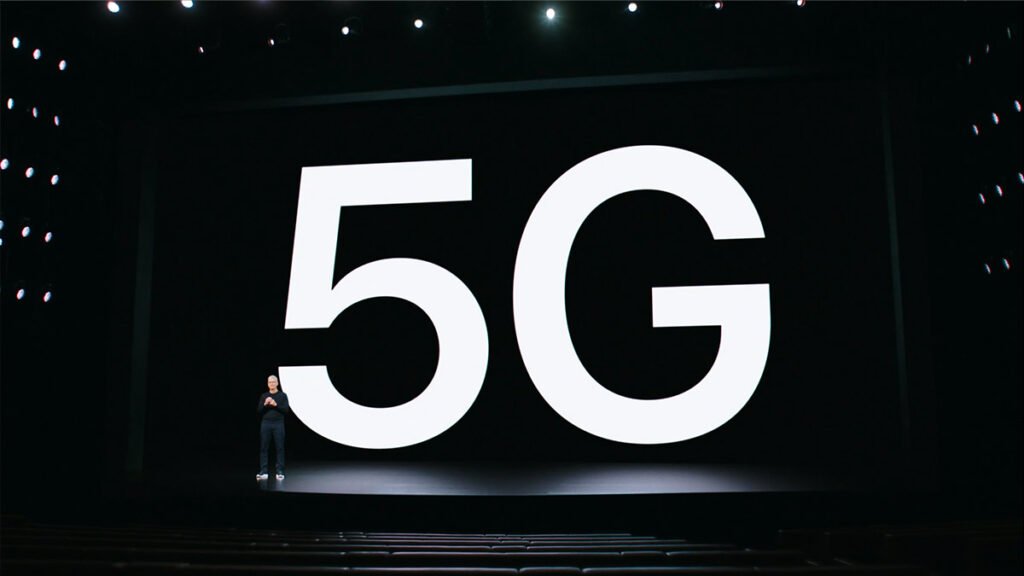

Many of the camera specifications outpace the competition, but we are more interested in the 5G support and processing speed. With major telecom providers expanding 5G coverage and Apple shipping smaller, faster chips, applications that integrate with IoT devices and run ML algorithms can now perform at a much higher level. This means that measurements, data transmissions, and real-time visibility that were previously impossible are now on the horizon for tech companies looking to bring their proofs of concept to market.

Every decade there’s a new generation of technology that provides a step change in what we can do with our iPhones. The next generation is here. Today is the beginning of a new era for iPhone. Today, we’re bringing 5G to iPhone. This is a huge moment for all of us and we’re really excited.

Tim Cook, CEO

If you are looking to create a high-quality iPhone 12 app to be used by millions, you need the right developer to help bring your idea into the limelight. At Zco, we are experts in mobile application development and can produce a premium augmented reality application that makes the most of Apple’s new iPhone 12 and its LIDAR technology. We are not limited to iOS development, however; we also offer a whole suite of services that make custom software development and maintenance easier.